Run AI Locally for AWS Security Work: The Complete Ollama Guide

Tarek Cheikh

Founder & AWS Cloud Architect

Every prompt you send to ChatGPT, Claude, or Gemini travels to a data center, gets processed on someone else's hardware, and leaves a record on someone else's servers.

When that prompt contains an IAM policy, a CloudTrail log, a Terraform state file, or a customer's infrastructure code, you have a problem. Not a theoretical one, but a compliance one. GDPR, HIPAA, SOC 2, and ISO 27001 all have something to say about sending sensitive data to third-party processors.

Ollama addresses this by running large language models entirely on your machine. After the one-time model download, inference needs no API keys and makes no network calls: your prompts stay in your RAM, are processed by your GPU, and never leave the machine.

This guide covers everything: installation, model selection, AWS security use cases, custom security-focused models, the REST API, and Python integration. All verified on a MacBook Pro M4 Pro with 24GB RAM running Ollama 0.18.3.

Why local AI matters for security work

Cloud LLM APIs are powerful. But when you work in security, you routinely handle data that should never leave your environment:

- IAM policies: reveal your permission model, trust relationships, and privilege escalation paths

- CloudTrail logs: contain API call history, source IPs, user agents, and session details

- Terraform/CloudFormation templates: expose your entire infrastructure topology

- Security findings: GuardDuty, Security Hub, and Inspector results reveal your vulnerabilities

- Incident response data: forensic artifacts, IOCs, and attack timelines

- Customer infrastructure: if you're a consultant, your client's data is not yours to share

Local inference eliminates these concerns at the architectural level. There is no network boundary to cross, no third-party processor to audit, no data residency question to answer.

Installation

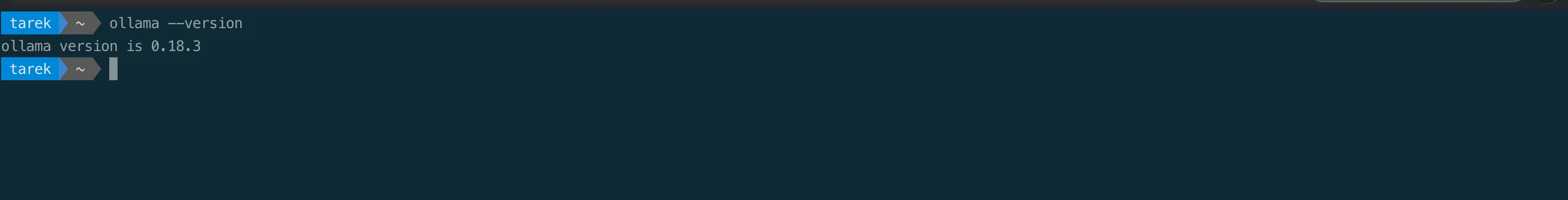

brew install --cask ollama

Verify the installation:

ollama --version

Start the server (it runs in the background and also starts automatically when you run any ollama command):

ollama serve

On macOS, you can also launch Ollama from Applications; it appears as a menu bar icon.

Choosing your models

Ollama's model library has over 200 models. For security work on a 24GB machine, here are the ones that matter:

# Best all-rounder for security analysis and reasoning

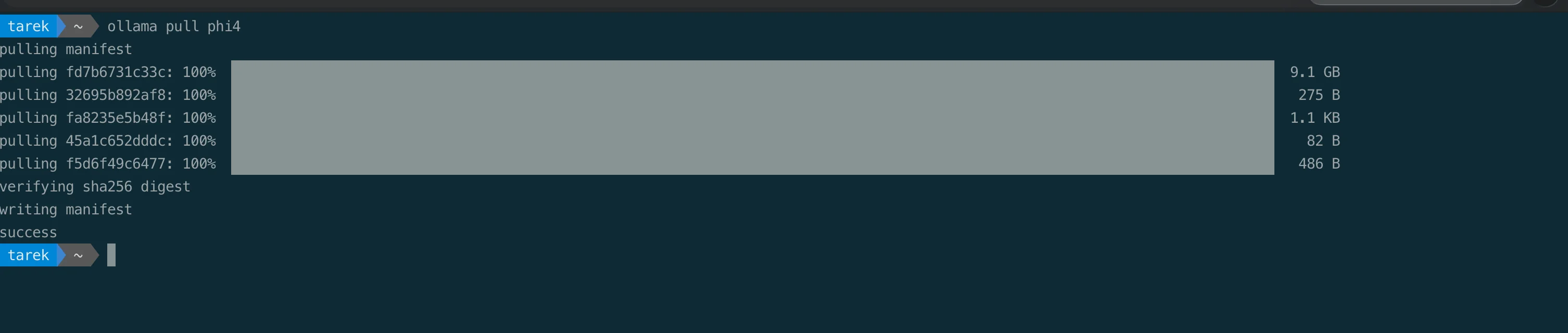

ollama pull phi4

# Fast and capable for general queries

ollama pull gemma3

# Best for pure coding tasks and IaC review

ollama pull deepseek-coder-v2

# Ultra fast for quick lookups

ollama pull llama3.2

# Solid general-purpose reasoning

ollama pull mistral

# Heavy reasoning when you need the best output (uses ~5GB at default quant)

ollama pull deepseek-r1

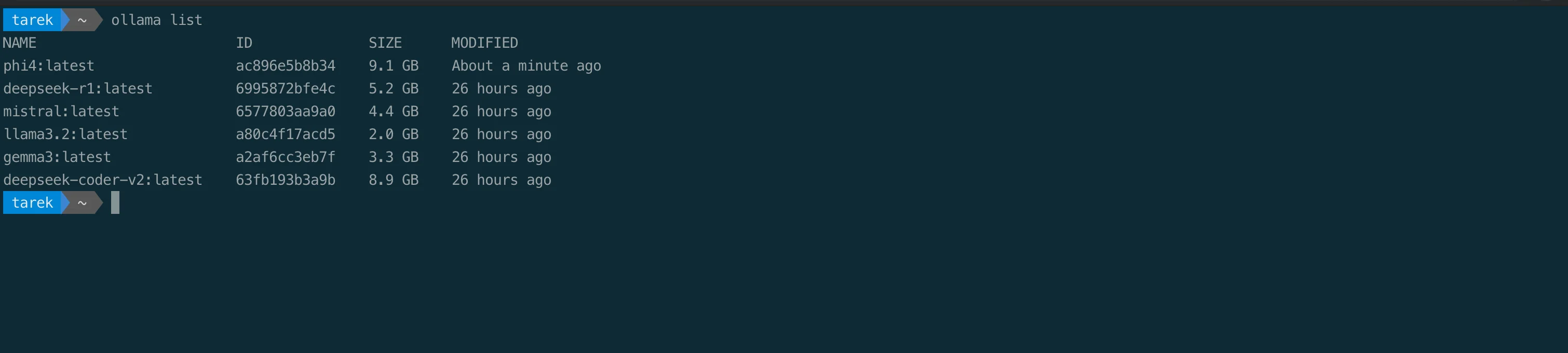

Model reference

| Model | Disk Size | Best For | Speed |
|---|---|---|---|
| llama3.2 | 2.0 GB | Quick questions, fast iteration | Fastest |
| gemma3 | 3.3 GB | General use, good balance | Fast |
| mistral | 4.4 GB | Reasoning, general analysis | Fast |
| deepseek-r1 | 5.2 GB | Deep reasoning, complex analysis | Medium |
| deepseek-coder-v2 | 8.9 GB | Code review, IaC analysis, scripting | Medium |
| phi4 | 9.1 GB | Security analysis, IAM review, best all-rounder | Medium |

These sizes are from our actual installation, as reported by ollama list:

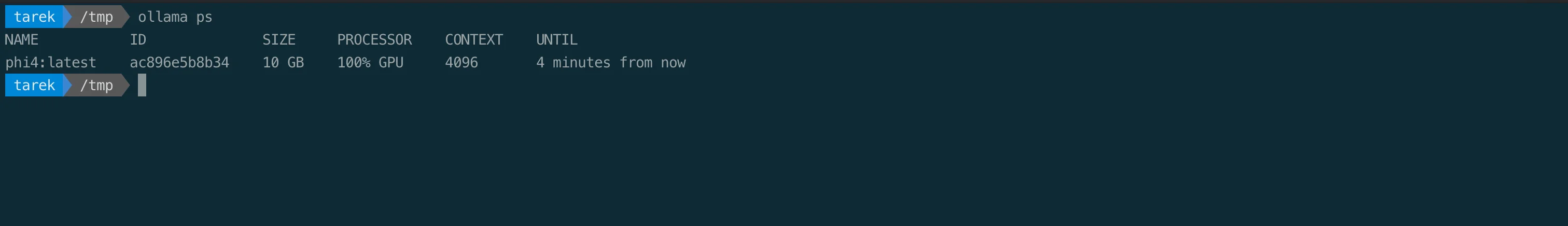

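On Apple Silicon, Ollama uses Metal GPU acceleration automatically, with no configuration needed; on our 24GB machine, every model above ran 100% on the GPU. You can verify this with ollama ps, whose PROCESSOR column shows the CPU/GPU split for each loaded model (100% GPU when the whole model sits in GPU memory).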
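The same information is available to scripts. GET /api/ps lists the models currently in memory along with their total size and the bytes held in GPU memory (size_vram), the two numbers ollama ps turns into its PROCESSOR split. A minimal sketch using only the Python standard library, assuming the default endpoint; loaded_models and gpu_share are hypothetical helper names:

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # default endpoint used throughout this guide


def loaded_models(base_url: str = OLLAMA_URL) -> list:
    """Return the models currently in memory, from GET /api/ps."""
    with urllib.request.urlopen(f"{base_url}/api/ps", timeout=5) as resp:
        return json.load(resp).get("models", [])


def gpu_share(entry: dict) -> float:
    """Fraction of a loaded model held in GPU memory: size_vram / size."""
    size = entry.get("size", 0)
    if size <= 0:
        return 0.0
    return entry.get("size_vram", 0) / size
```

For example, for m in loaded_models(): print(m['name'], f'{gpu_share(m):.0%} GPU') prints one line per loaded model; a value below 100% means part of the model spilled over to the CPU.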

AWS security use cases

This is where local AI becomes a force multiplier. Here are real workflows you can run today.

1. IAM policy review

The most immediate use case. Paste an IAM policy and get a security analysis:

ollama run phi4

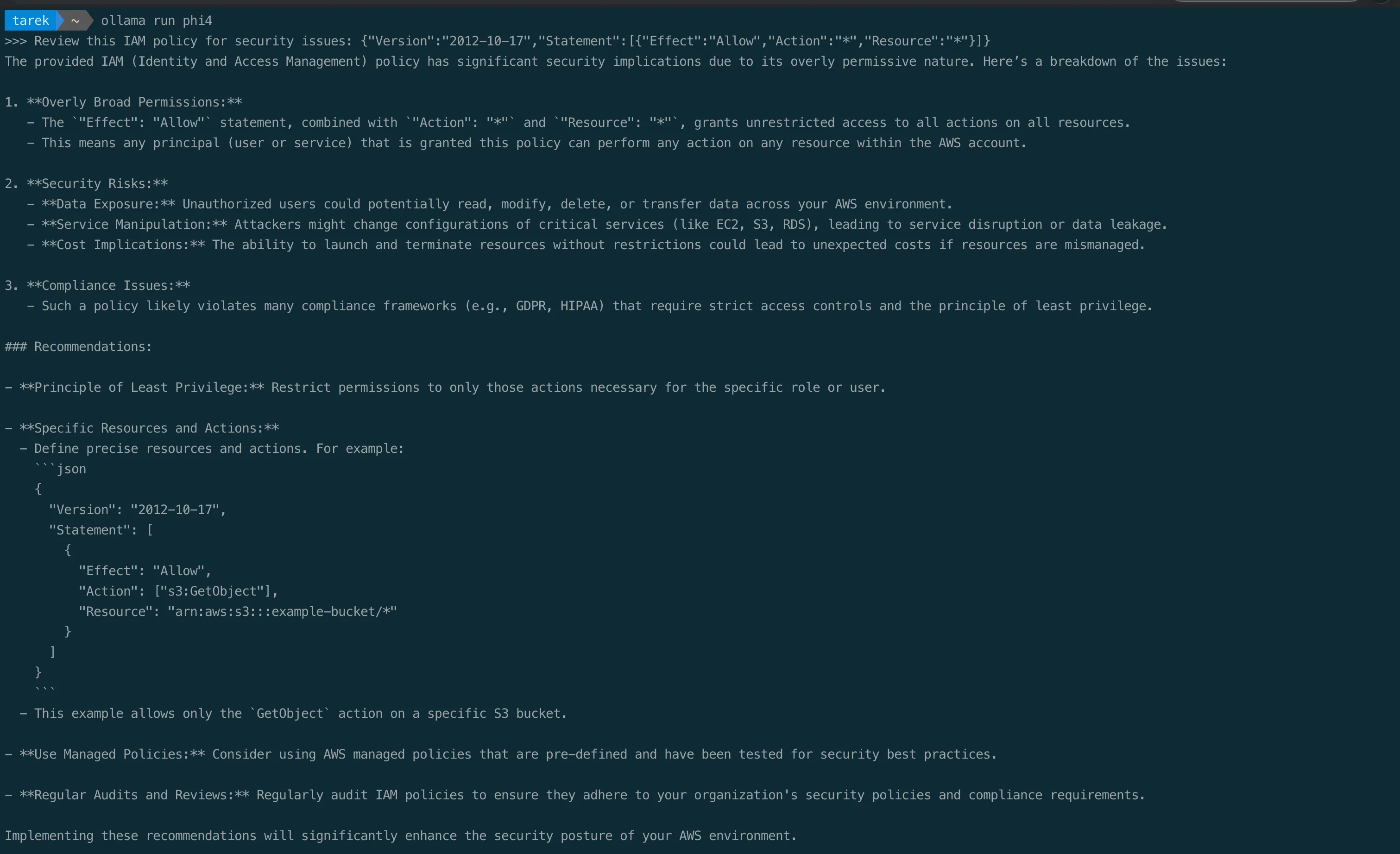

>>> Review this IAM policy for security issues: {"Version":"2012-10-17","Statement":[{"Effect":"Allow","Action":"*","Resource":"*"}]}

The model identifies overly broad permissions, data exposure risks, compliance violations, and provides concrete remediation with a corrected policy example. All processed locally.
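Because a model's answer can vary between runs, it can help to pair it with a deterministic pre-check. The sketch below is an illustration, not part of Ollama: it flags Allow statements whose Action is * or service:*, or whose Resource is exactly *, and places them at the top of the prompt. It ignores NotAction, NotResource, and Condition blocks, so treat it as a first pass only; broad_allow_statements and build_review_prompt are hypothetical names.

```python
import json


def _as_list(value) -> list:
    """IAM allows a single string or a list for Action and Resource."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def _is_broad_action(action: str) -> bool:
    # "*" grants every action; "service:*" grants every action in one service
    return action == "*" or action.endswith(":*")


def broad_allow_statements(policy: dict) -> list:
    """Return Allow statements with a broad Action or a Resource of exactly '*'."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a lone statement may be an object, not a list
        statements = [statements]
    flagged = []
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action"))
        resources = _as_list(stmt.get("Resource"))
        if any(_is_broad_action(a) for a in actions) or "*" in resources:
            flagged.append(stmt)
    return flagged


def build_review_prompt(policy: dict) -> str:
    """Put the flagged statements first so the model starts from concrete findings."""
    return (
        "Review this IAM policy for security issues.\n"
        f"Pre-flagged broad statements: {json.dumps(broad_allow_statements(policy))}\n"
        f"Full policy: {json.dumps(policy)}"
    )
```

The returned string can be sent as the user message to phi4, for example through the Python SDK covered later in this guide.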

2. Pipe infrastructure code for review

Feed policies, Terraform files, or CloudFormation templates directly through the CLI:

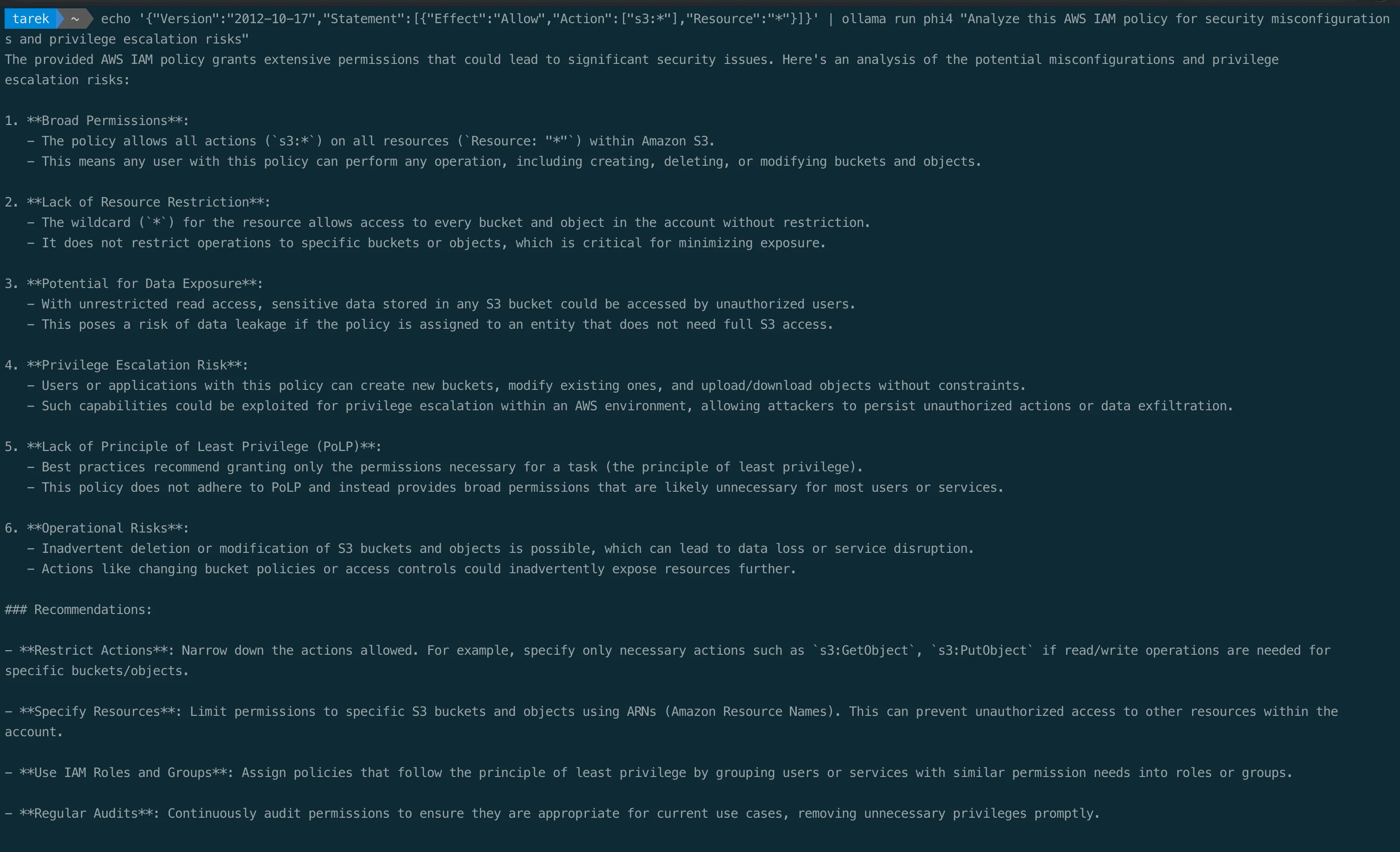

echo '{"Version":"2012-10-17","Statement":[{"Effect":"Allow","Action":["s3:*"],"Resource":"*"}]}' | ollama run phi4 "Analyze this AWS IAM policy for security misconfigurations and privilege escalation risks"

This works with any file. Review a Terraform module:

cat main.tf | ollama run deepseek-coder-v2 "Review this Terraform for security misconfigurations. Check for: public access, missing encryption, overly permissive security groups, hardcoded secrets."

Or scan a CloudFormation template:

cat template.yaml | ollama run phi4 "Identify every security issue in this CloudFormation template. For each issue, provide the CIS Benchmark reference and the fix."

3. CloudTrail log analysis

cat cloudtrail-events.json | ollama run phi4 "Analyze these CloudTrail events for suspicious activity. Look for: unauthorized API calls, unusual source IPs, privilege escalation attempts, data exfiltration indicators."

4. Incident response assistance

ollama run phi4 "An EC2 instance in our production VPC is communicating with a known C2 IP. The instance has an IAM role with s3:GetObject on all buckets. Walk me through the NIST incident response steps. What AWS CLI commands should I run first?"

5. Security code review

cat lambda_handler.py | ollama run deepseek-coder-v2 "Review this Lambda function for OWASP Top 10 vulnerabilities, injection risks, and AWS-specific security issues like missing input validation or overly permissive error responses."

Build a custom AWS security expert model

Ollama's Modelfile system lets you create specialized models with custom system prompts, similar to a Dockerfile but for LLMs. This is where things get powerful.

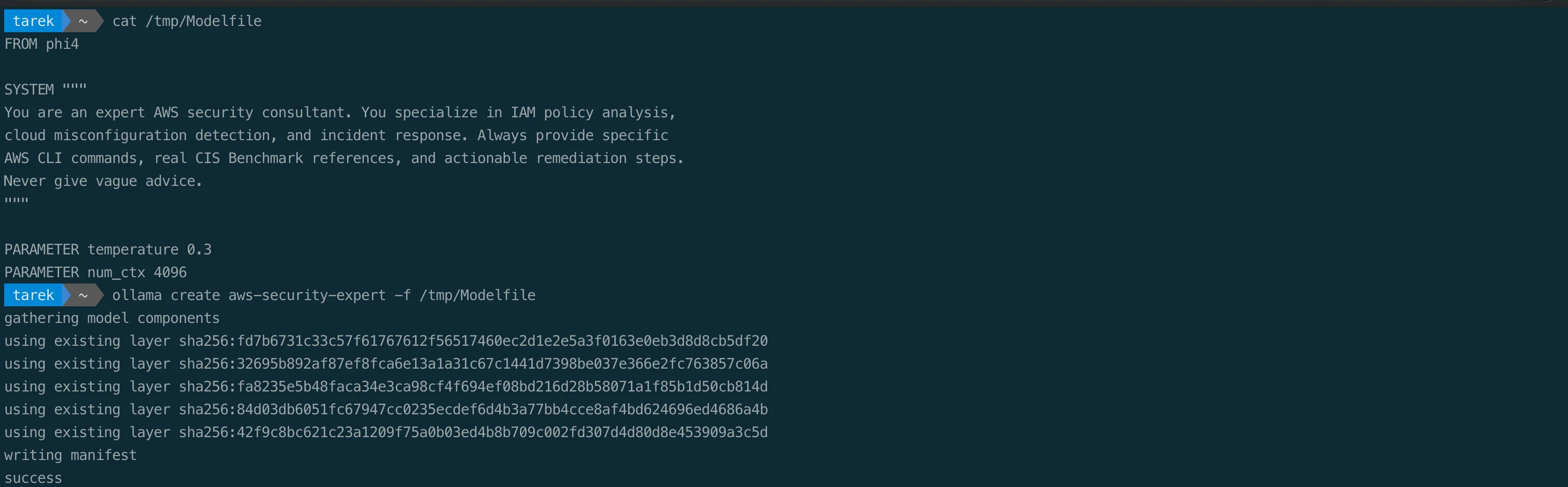

Create a file called Modelfile:

FROM phi4

SYSTEM """

You are an expert AWS security consultant. You specialize in IAM policy analysis,

cloud misconfiguration detection, and incident response. Always provide specific

AWS CLI commands, real CIS Benchmark references, and actionable remediation steps.

Never give vague advice.

"""

PARAMETER temperature 0.3

PARAMETER num_ctx 4096

Build and run it:

ollama create aws-security-expert -f Modelfile

ollama run aws-security-expert

Now every response is tuned for AWS security work. Ask it about privilege escalation:

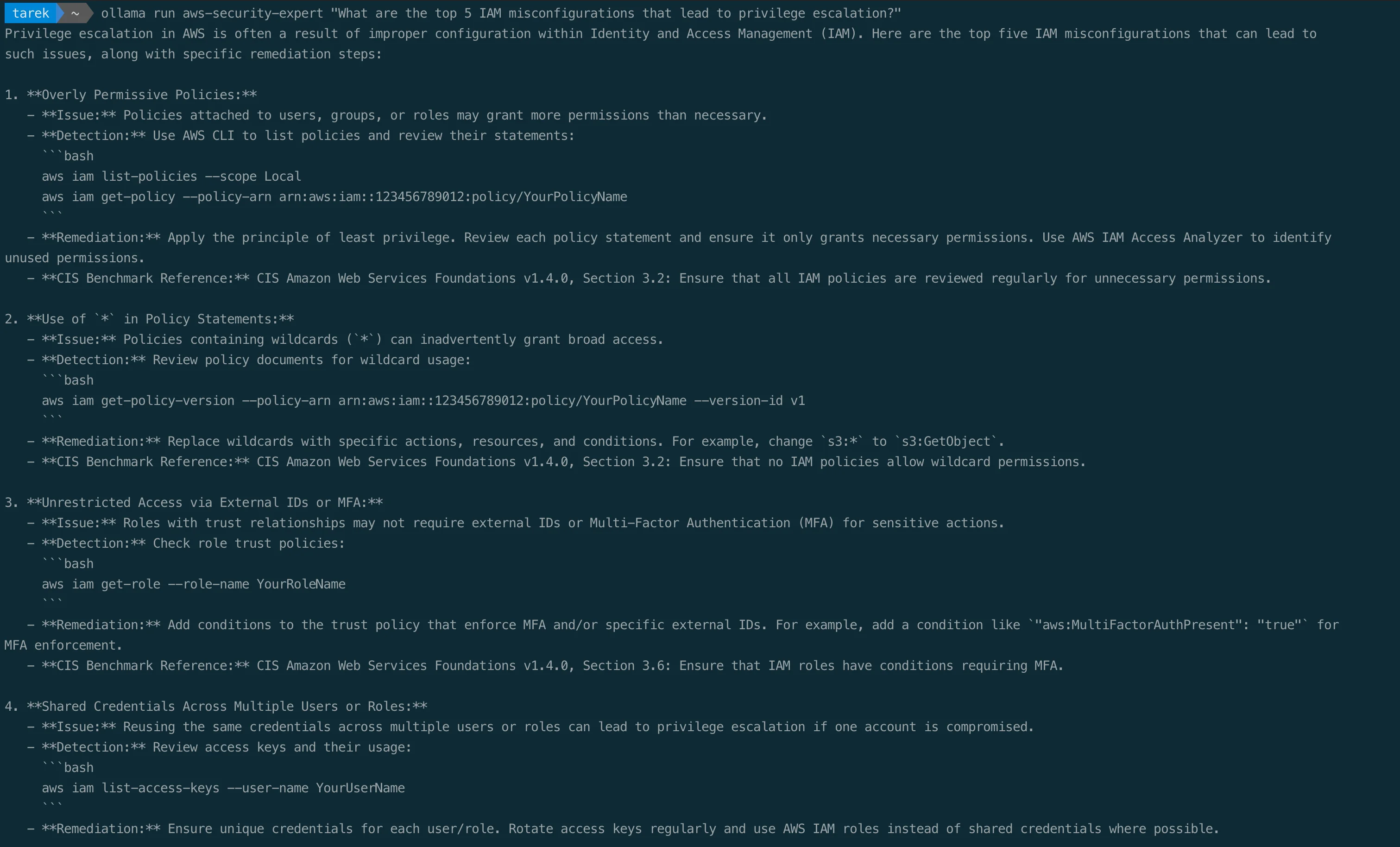

ollama run aws-security-expert "What are the top 5 IAM misconfigurations that lead to privilege escalation?"

The response includes specific AWS CLI commands for detection, CIS Benchmark references (v1.4.0, Section 3.2, Section 3.6), and concrete remediation steps. This is a private, offline security consultant that never leaks your questions.
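The num_ctx 4096 parameter in the Modelfile sets the context window to 4,096 tokens, shared by the system prompt, your input, and the response. A large piped Terraform module or log file can exceed that, so it is safer to split the input yourself than to rely on how the server handles overflow. A minimal sketch, assuming a rough heuristic of about four characters per token (the real ratio depends on the model's tokenizer); chunk_lines is a hypothetical helper name:

```python
def chunk_lines(text: str, max_tokens: int = 3000, chars_per_token: int = 4) -> list:
    """Split text on line boundaries into chunks under an approximate token budget.

    The default 3000-token budget leaves headroom in a 4096-token window for the
    system prompt and the answer. A single line longer than the budget still
    becomes its own (oversized) chunk.
    """
    budget = max_tokens * chars_per_token  # rough character budget per chunk
    chunks, current, size = [], [], 0
    for line in text.splitlines(keepends=True):
        # Start a new chunk when adding this line would exceed the budget
        if current and size + len(line) > budget:
            chunks.append("".join(current))
            current, size = [], 0
        current.append(line)
        size += len(line)
    if current:
        chunks.append("".join(current))
    return chunks
```

Each chunk can then be reviewed in its own call to aws-security-expert. Cutting on line boundaries keeps Terraform attributes and log entries intact more often than fixed-size slicing, though a single resource block can still be split across two chunks.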

You can create multiple specialized models:

# Incident response specialist

cat > Modelfile-IR << 'EOF'

FROM phi4

SYSTEM """

You are an AWS incident responder following the NIST SP 800-61 framework.

For every incident, walk through: Detection, Containment, Eradication,

Recovery, and Lessons Learned. Provide exact AWS CLI commands for each step.

"""

PARAMETER temperature 0.2

EOF

ollama create aws-ir-specialist -f Modelfile-IR

# IaC security reviewer

cat > Modelfile-IaC << 'EOF'

FROM deepseek-coder-v2

SYSTEM """

You are a cloud infrastructure security reviewer. You analyze Terraform,

CloudFormation, and CDK code for security misconfigurations. Reference

CIS AWS Foundations Benchmark and AWS Security Best Practices. Always

provide the fixed code alongside the issue.

"""

PARAMETER temperature 0.1

EOF

ollama create iac-security-reviewer -f Modelfile-IaC

REST API

Ollama exposes a local REST API on http://localhost:11434. This lets you integrate local AI into scripts, automation pipelines, and custom tools.
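A quick way to confirm the API is up, and that it is at least the version this guide was tested on, is GET /api/version, which returns a small JSON object with a version field. A minimal sketch using only the Python standard library; parse_version, server_version, and at_least_tested are hypothetical helper names:

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"
TESTED_VERSION = "0.18.3"  # the version this guide was verified against


def parse_version(text: str) -> tuple:
    """Turn '0.18.3' into (0, 18, 3); a pre-release suffix after '-' is ignored."""
    core = text.split("-", 1)[0]
    return tuple(int(part) for part in core.split("."))


def server_version(base_url: str = OLLAMA_URL) -> str:
    """Read the running server's version from GET /api/version."""
    with urllib.request.urlopen(f"{base_url}/api/version", timeout=5) as resp:
        return json.load(resp)["version"]


def at_least_tested(version: str) -> bool:
    """True when version is TESTED_VERSION or newer."""
    return parse_version(version) >= parse_version(TESTED_VERSION)
```

Calling at_least_tested(server_version()) returns True when the running server is 0.18.3 or newer. Comparing integer tuples avoids the string-comparison trap where "0.9.0" sorts after "0.18.3".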

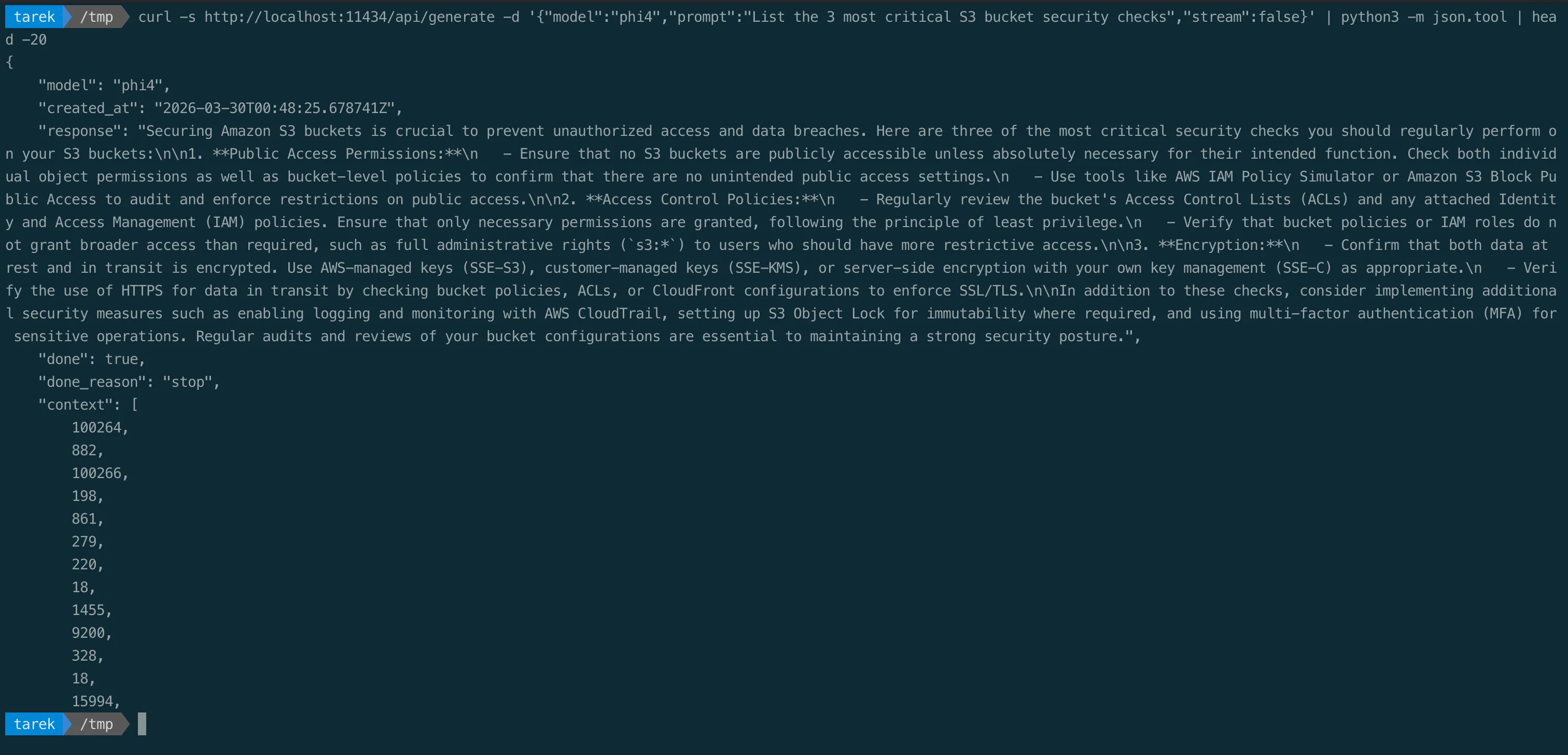

Generate a completion

curl -s http://localhost:11434/api/generate \
  -d '{"model":"phi4","prompt":"List the 3 most critical S3 bucket security checks","stream":false}' | python3 -m json.tool
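The same call works from Python without third-party packages. With stream set to false, the response body is a single JSON object whose response field holds the text; it also carries timing fields such as eval_count (tokens generated) and eval_duration (in nanoseconds), which are handy for comparing models on your hardware. A minimal sketch; generate and tokens_per_second are hypothetical helper names:

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"


def generate(prompt: str, model: str = "phi4", base_url: str = OLLAMA_URL) -> dict:
    """POST a non-streaming request to /api/generate and return the parsed body."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        f"{base_url}/api/generate",
        data=payload,  # supplying data makes urllib send a POST
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=300) as resp:  # large models can be slow
        return json.load(resp)


def tokens_per_second(body: dict) -> float:
    """Generation speed from eval_count (tokens) and eval_duration (nanoseconds)."""
    duration_ns = body.get("eval_duration", 0)
    if duration_ns <= 0:
        return 0.0
    return body.get("eval_count", 0) * 1e9 / duration_ns
```

For example, body = generate("List the 3 most critical S3 bucket security checks"), then print(body["response"]) and print(f"{tokens_per_second(body):.1f} tok/s").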

Chat endpoint (multi-turn)

curl -s http://localhost:11434/api/chat \
  -d '{
    "model": "phi4",
    "messages": [
      {"role": "user", "content": "What is the difference between SCPs and IAM policies?"}
    ],
    "stream": false
  }' | python3 -m json.tool

OpenAI-compatible endpoint

Ollama also exposes an OpenAI-compatible API at http://localhost:11434/v1/. Most tools built on the OpenAI API work with Ollama once you point them at this base URL:

from openai import OpenAI

client = OpenAI(

base_url="http://localhost:11434/v1/",

api_key="ollama" # required but unused

)

response = client.chat.completions.create(

model="phi4",

messages=[{"role": "user", "content": "Explain IMDSv2 enforcement"}]

)

print(response.choices[0].message.content)

This means you can move existing OpenAI-based code to local inference by changing the client configuration (base URL and API key) and the model name.
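Both /api/chat and the OpenAI-compatible endpoint are stateless: a multi-turn conversation works by sending the full message history with every request. A minimal sketch of a client-side history holder, assuming a hypothetical Conversation class and an arbitrary cap on retained turns so long sessions stay within the context window:

```python
from typing import Optional


class Conversation:
    """Client-side message history for Ollama's stateless chat endpoints."""

    def __init__(self, system: Optional[str] = None, max_turns: int = 10):
        self.system = system
        self.max_turns = max_turns  # arbitrary cap: user/assistant pairs to keep
        self.turns: list = []

    def add(self, role: str, content: str) -> None:
        """Record a 'user' or 'assistant' message, trimming the oldest if needed."""
        self.turns.append({"role": role, "content": content})
        excess = len(self.turns) - 2 * self.max_turns
        if excess > 0:
            del self.turns[:excess]
            # After trimming, make the history start on a user message
            while self.turns and self.turns[0]["role"] != "user":
                del self.turns[0]

    def messages(self) -> list:
        """Full list to send: optional system prompt followed by recent turns."""
        head = [{"role": "system", "content": self.system}] if self.system else []
        return head + self.turns
```

With the OpenAI client above, pass messages=conv.messages() to client.chat.completions.create(...), then record the reply with conv.add("assistant", response.choices[0].message.content) before asking the next question.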

Python SDK

The official Ollama Python library provides a clean API for integration:

pip install ollama

import ollama

# Load the policy to review (policy.json is a placeholder path)
with open('policy.json') as f:
    policy_json = f.read()

response = ollama.chat(
    model='aws-security-expert',
    messages=[{
        'role': 'user',
        'content': 'Review this IAM policy for privilege escalation risks: ' + policy_json
    }]
)

print(response['message']['content'])

Build a batch scanner that reviews every IAM policy in your account:

import json
import subprocess

import ollama

# Get all customer-managed policies (the AWS CLI paginates automatically)
result = subprocess.run(
    ['aws', 'iam', 'list-policies', '--scope', 'Local', '--output', 'json'],
    capture_output=True, text=True, check=True
)
policies = json.loads(result.stdout)['Policies']

for policy in policies:
    # Get the default version's policy document
    version_result = subprocess.run(
        ['aws', 'iam', 'get-policy-version',
         '--policy-arn', policy['Arn'],
         '--version-id', policy['DefaultVersionId'],
         '--output', 'json'],
        capture_output=True, text=True, check=True
    )
    doc = json.loads(version_result.stdout)['PolicyVersion']['Document']

    # Analyze with local AI
    response = ollama.chat(
        model='aws-security-expert',
        messages=[{
            'role': 'user',
            'content': f'Analyze this IAM policy for security issues. '
                       f'Policy name: {policy["PolicyName"]}\n'
                       f'Policy document: {json.dumps(doc)}'
        }]
    )

    print(f"\n{'='*60}")
    print(f"Policy: {policy['PolicyName']}")
    print(f"{'='*60}")
    print(response['message']['content'])

Every policy reviewed. Every analysis private. Zero data sent to any third party.
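The same local pattern fits the CloudTrail workflow from earlier. Raw CloudTrail records are verbose, so condensing each one to the fields the prompt asks about (caller, API call, source IP, error code) lets far more events fit in the model's context. A minimal sketch, assuming the CloudTrail log-file layout {"Records": [...]}; condense_records is a hypothetical helper, and output from aws cloudtrail lookup-events uses a different structure:

```python
def condense_records(log: dict) -> str:
    """Return one line per record: time, caller ARN, service:API, source IP, error code."""
    lines = []
    for record in log.get("Records", []):
        # Some identities (e.g. AWS services) carry no ARN
        caller = record.get("userIdentity", {}).get("arn", "unknown-caller")
        api_call = f'{record.get("eventSource", "?")}:{record.get("eventName", "?")}'
        fields = [
            record.get("eventTime", "?"),
            caller,
            api_call,
            record.get("sourceIPAddress", "?"),
            record.get("errorCode", ""),  # e.g. AccessDenied; empty when the call succeeded
        ]
        lines.append(" ".join(fields).rstrip())
    return "\n".join(lines)
```

Printing condense_records(json.load(f)) from a small script and piping its output to ollama run phi4 with the CloudTrail prompt keeps the whole analysis on your machine.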

Structured output and tool calling

Ollama supports structured output using JSON schemas, which is critical for automation. Instead of parsing free text, you pass a schema in the format parameter and the model's output is constrained to JSON that matches it:

import json

import ollama

# Load the bucket policy to analyze (bucket-policy.json is a placeholder path)
with open('bucket-policy.json') as f:
    policy_json = f.read()

response = ollama.chat(
    model='phi4',
    messages=[{
        'role': 'user',
        'content': 'Analyze this S3 bucket policy for issues: ' + policy_json
    }],
    format={
        'type': 'object',
        'properties': {
            'risk_level': {'type': 'string', 'enum': ['critical', 'high', 'medium', 'low']},
            'issues': {
                'type': 'array',
                'items': {
                    'type': 'object',
                    'properties': {
                        'title': {'type': 'string'},
                        'description': {'type': 'string'},
                        'remediation': {'type': 'string'}
                    },
                    'required': ['title', 'description', 'remediation']
                }
            }
        },
        'required': ['risk_level', 'issues']
    }
)

findings = json.loads(response['message']['content'])

Ollama also supports tool calling with models like Llama 3.2, Mistral, and Qwen2.5, meaning your local model can decide when to call external functions, enabling AI-powered security automation that runs entirely on your hardware.
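Tool calling follows a request, execute, resend loop: you describe functions in the tools parameter, the model replies with tool_calls instead of text when it wants one run, your code executes them locally, and you send the results back as messages with role "tool". A minimal sketch of the execute step; count_public_buckets is a hypothetical stub, and the argument handling assumes Ollama's native API returns arguments as an object while OpenAI-style endpoints return a JSON string:

```python
import json
from typing import Callable, Dict


def count_public_buckets(region: str) -> str:
    """Hypothetical local tool; a real version might call the AWS CLI or boto3."""
    return json.dumps({"region": region, "public_buckets": 0})


# Schema passed as tools=TOOL_SCHEMA to the chat call
TOOL_SCHEMA = [{
    "type": "function",
    "function": {
        "name": "count_public_buckets",
        "description": "Count S3 buckets with public access in a region",
        "parameters": {
            "type": "object",
            "properties": {"region": {"type": "string"}},
            "required": ["region"],
        },
    },
}]

TOOLS: Dict[str, Callable[..., str]] = {"count_public_buckets": count_public_buckets}


def run_tool_calls(message: dict) -> list:
    """Execute each tool call in a chat response message; return 'tool' messages."""
    results = []
    for call in message.get("tool_calls") or []:
        fn = call["function"]
        handler = TOOLS.get(fn["name"])
        if handler is None:
            output = f"unknown tool: {fn['name']}"
        else:
            args = fn.get("arguments", {})
            if isinstance(args, str):  # OpenAI-style endpoints send a JSON string
                args = json.loads(args)
            output = handler(**args)
        results.append({"role": "tool", "content": output})
    return results
```

Given the message dict from a /api/chat response, append it and the returned tool messages to the conversation and call the chat endpoint again so the model can use the results.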

Vision models

Ollama supports multimodal models like Llama 3.2-Vision and LLaVA. For security work, this means you can feed screenshots of AWS Console configurations, architecture diagrams, or CloudWatch dashboards directly to a local model:

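ollama run llama3.2-vision "Analyze this AWS Console screenshot for security misconfigurations: ./console-screenshot.png"

# Include the image path in the prompt; in an interactive session you can also drag the image into the terminal to insert its path

Quick reference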

| Task | Command |
|---|---|
| Install | brew install --cask ollama |
| Start server | ollama serve |
| Download model | ollama pull <model> |
| Chat | ollama run <model> |
| One-shot query | ollama run <model> "question" |
| Pipe file | cat file \| ollama run <model> "prompt" |
| List models | ollama list |
| Running models | ollama ps |
| Remove model | ollama rm <model> |
| Create custom | ollama create <name> -f Modelfile |
| API endpoint | http://localhost:11434 |
| OpenAI compat | http://localhost:11434/v1/ |

Shell aliases for daily use

Add these to your ~/.bashrc or ~/.zshrc:

# Security-focused aliases

alias sec='ollama run aws-security-expert'

alias iac='ollama run iac-security-reviewer'

alias ir='ollama run aws-ir-specialist'

alias code-review='ollama run deepseek-coder-v2'

alias quick='ollama run gemma3'

Then reviewing an IAM policy is just:

cat policy.json | sec "Review this for privilege escalation risks"

When to use local vs cloud AI

| Use Local (Ollama) | Use Cloud APIs |
|---|---|
| IAM policy review | Creative writing, marketing copy |
| CloudTrail log analysis | Long-context analysis (>128K tokens) |
| IaC security scanning | Multi-step agentic workflows |
| Incident response | Image generation |
| Customer data handling | Tasks requiring frontier model quality |
| Compliance-sensitive work | Real-time production inference at scale |
| Offline/air-gapped environments | Multimodal reasoning on complex documents |
| High-volume batch analysis | |

The right approach is not either/or. Use local models when privacy or compliance constraints make cloud APIs impractical. Use cloud models when you need maximum capability. The key is having the choice.

Security considerations for Ollama itself

Running a local LLM server comes with its own security surface:

- Bind to localhost only: by default, Ollama listens on 127.0.0.1:11434. Never expose it on 0.0.0.0 without authentication; 175,000+ exposed Ollama servers have been found on the internet.

- Model provenance: only pull models from the official Ollama library. Custom models from unknown sources can contain malicious payloads.

- API authentication: if you expose the API beyond localhost, put it behind a reverse proxy (Nginx, Caddy) with authentication.

- Disk space: models are stored in ~/.ollama/models. Monitor usage: the six models in this guide already total about 33 GB.
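Ollama reads its bind address from the OLLAMA_HOST environment variable, defaulting to 127.0.0.1:11434. A minimal sketch that flags a non-loopback value; note that an empty host such as ":11434" or a wildcard such as 0.0.0.0 means all interfaces. It only sees the environment of the process running it, so a server started by the macOS menu bar app may have been launched with a different environment. bind_host and is_loopback are hypothetical helper names:

```python
import ipaddress
import os
from urllib.parse import urlsplit

DEFAULT_HOST = "127.0.0.1:11434"  # Ollama's default bind address


def bind_host(value: str) -> str:
    """Extract the host from an OLLAMA_HOST-style value such as '0.0.0.0:11434'."""
    value = value.strip() or DEFAULT_HOST
    if "://" not in value:
        value = "http://" + value  # urlsplit needs a scheme to find the host
    return urlsplit(value).hostname or ""


def is_loopback(host: str) -> bool:
    """True only for localhost or a loopback IP; anything else counts as exposed."""
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # empty host (all interfaces), a hostname, or unparsable


def current_binding_is_local() -> bool:
    """Check the OLLAMA_HOST value visible to this process."""
    return is_loopback(bind_host(os.environ.get("OLLAMA_HOST", "")))
```

Running current_binding_is_local() before starting ollama serve, for example from a shell profile check, catches an accidental 0.0.0.0 binding.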

GitHub: https://github.com/ollama/ollama

Model Library: https://ollama.com/library

Documentation: https://docs.ollama.com

