Amazon S3 Deep Dive: Object Storage from Basics to Production

Tarek Cheikh

Founder & AWS Cloud Architect

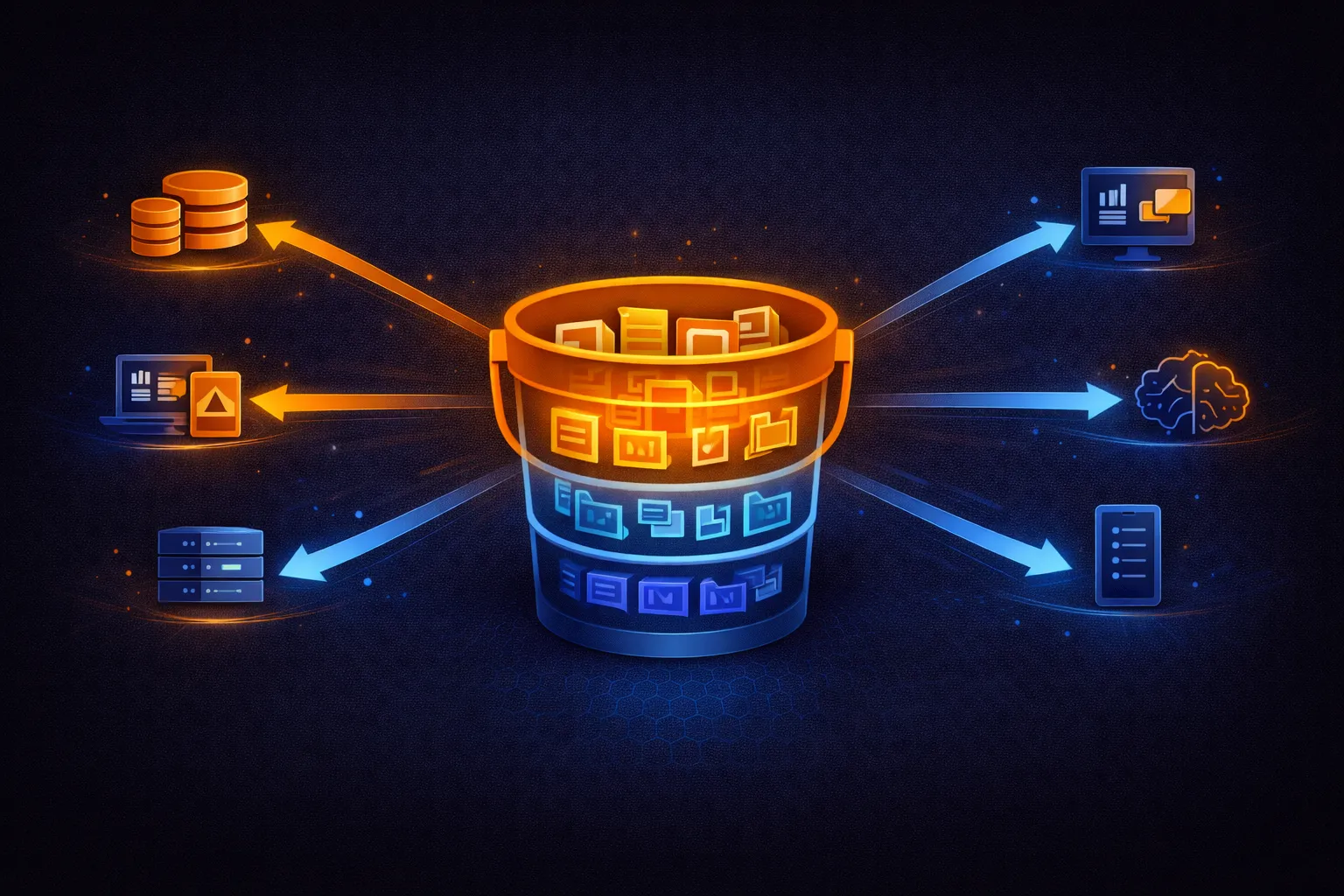

In the previous articles, we covered compute with EC2 and Lambda. Both services need somewhere to store data, and for most AWS workloads that place is Amazon S3 (Simple Storage Service). S3 is an object storage service with virtually unlimited capacity, 99.999999999% (eleven nines) durability, and pay-per-use pricing that ranges from about two cents down to a fraction of a cent per gigabyte per month, depending on the storage class.

This article covers S3 from first principles to production patterns: the object model, storage classes, security, encryption, lifecycle management, replication, performance optimization, and the architectural patterns that make S3 the backbone of AWS infrastructure.

What Is S3?

S3 is an object storage service, fundamentally different from block storage (EBS) or file storage (EFS). Objects in S3 are not organized in a file system hierarchy. Instead, each object is identified by a unique key within a flat namespace called a bucket.

- Bucket: A container for objects. Bucket names are globally unique across all AWS accounts. Each bucket exists in a specific AWS region.

- Object: A file plus its metadata. Objects can be from 0 bytes to 5 TB in size.

- Key: The unique identifier for an object within a bucket (e.g., photos/2025/vacation.jpg). The slash is part of the key string, not a directory separator; S3 has no directories.

- Metadata: Key-value pairs attached to each object. Includes system metadata (Content-Type, Last-Modified) and custom user metadata.

- Tags: Key-value labels for cost allocation, lifecycle policies, and access control. Up to 10 tags per object.
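Because the namespace is flat, the "folders" shown by the console and the CLI come from grouping keys by a prefix and a delimiter at list time. The sketch below imitates that grouping in plain Python on an in-memory list of keys. The function name `group_by_delimiter` and the sample keys are hypothetical; a real listing would pass `Prefix` and `Delimiter` to `list_objects_v2` instead.

```python
def group_by_delimiter(keys, prefix="", delimiter="/"):
    """Split keys under `prefix` into direct objects and rolled-up "folders".

    A rough imitation of the Contents / CommonPrefixes split in a list
    response. It is an illustration, not the S3 implementation.
    """
    contents, common_prefixes = [], set()
    for key in sorted(keys):
        if not key.startswith(prefix):
            continue
        rest = key[len(prefix):]
        if delimiter in rest:
            # Everything up to the first delimiter after the prefix is
            # reported once, as if it were a folder.
            folder = rest.split(delimiter, 1)[0] + delimiter
            common_prefixes.add(prefix + folder)
        else:
            contents.append(key)
    return contents, sorted(common_prefixes)


keys = [
    "photos/2025/vacation.jpg",
    "photos/2025/birthday.jpg",
    "photos/2024/hiking.jpg",
    "photos/index.html",
    "readme.txt",
]
top_objects, top_folders = group_by_delimiter(keys)
photo_objects, photo_folders = group_by_delimiter(keys, prefix="photos/")
print(top_objects, top_folders)
print(photo_objects, photo_folders)
```

The keys themselves never change; only the prefix and delimiter chosen at list time decide what looks like a folder.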

Consistency Model

Since December 2020, S3 provides strong read-after-write consistency for all operations. After a successful PUT or DELETE, any subsequent GET or LIST immediately reflects the change. There is no eventual consistency delay. This applies to all S3 operations including overwrites and deletes.

Working with S3

Bucket Operations

# Create a bucket

aws s3 mb s3://my-company-data-2025

# Create a bucket in a specific region

aws s3api create-bucket \

--bucket my-company-data-2025 \

--region eu-west-1 \

--create-bucket-configuration LocationConstraint=eu-west-1

# List all buckets

aws s3 ls

# List objects in a bucket

aws s3 ls s3://my-company-data-2025/

aws s3 ls s3://my-company-data-2025/ --recursive --human-readable

# Delete an empty bucket

aws s3 rb s3://my-company-data-2025

# Delete a bucket and ALL its contents

aws s3 rb s3://my-company-data-2025 --force

Object Operations

# Upload a file

aws s3 cp report.pdf s3://my-bucket/reports/report.pdf

# Upload with metadata

aws s3 cp report.pdf s3://my-bucket/reports/report.pdf \

--metadata '{"department":"finance","quarter":"Q1"}'

# Download a file

aws s3 cp s3://my-bucket/reports/report.pdf ./downloaded-report.pdf

# Sync a local directory to S3

aws s3 sync ./website s3://my-bucket/static/ --delete

# Sync with exclusions

aws s3 sync ./project s3://my-bucket/backup/ \

--exclude "*.tmp" \

--exclude ".git/*" \

--exclude "node_modules/*"

# Copy between buckets

aws s3 cp s3://source-bucket/data.csv s3://destination-bucket/data.csv

# Move (copy + delete original)

aws s3 mv s3://my-bucket/old-location/file.txt s3://my-bucket/new-location/file.txt

# Delete an object

aws s3 rm s3://my-bucket/reports/old-report.pdf

# Delete all objects with a prefix

aws s3 rm s3://my-bucket/tmp/ --recursive

Python SDK (boto3)

import boto3

import json

s3 = boto3.client('s3')

# Upload a file

s3.upload_file('local_file.jpg', 'my-bucket', 'images/photo.jpg')

# Upload with extra arguments

s3.upload_file(

'report.pdf',

'my-bucket',

'reports/2025-Q1.pdf',

ExtraArgs={

'ContentType': 'application/pdf',

'ServerSideEncryption': 'aws:kms',

'Metadata': {'department': 'finance'}

}

)

# Download a file

s3.download_file('my-bucket', 'images/photo.jpg', 'downloaded.jpg')

# Upload from memory (put_object)

data = json.dumps({'users': 150, 'revenue': 45000})

s3.put_object(

Bucket='my-bucket',

Key='metrics/daily.json',

Body=data.encode('utf-8'),

ContentType='application/json'

)

# Read directly from S3

response = s3.get_object(Bucket='my-bucket', Key='metrics/daily.json')

content = json.loads(response['Body'].read().decode('utf-8'))

# List objects with pagination (handles > 1000 objects)

paginator = s3.get_paginator('list_objects_v2')

for page in paginator.paginate(Bucket='my-bucket', Prefix='logs/'):

    for obj in page.get('Contents', []):

        print(f"{obj['Key']} {obj['Size']} bytes {obj['LastModified']}")

# Check if object exists

try:

    s3.head_object(Bucket='my-bucket', Key='data.csv')

    print("Object exists")

except s3.exceptions.ClientError as e:

    if e.response['Error']['Code'] == '404':

        print("Object does not exist")

    else:

        raise  # e.g. 403: do not report a permission error as "missing"

Storage Classes

S3 offers multiple storage classes, each optimized for different access patterns and cost requirements. All classes provide the same eleven nines (99.999999999%) of durability. The difference is in availability, retrieval time, and cost.

# Storage class comparison (us-east-1 pricing):

| Storage Class | $/GB/month | Retrieval | Min Duration | Availability | Use Case |
|---|---|---|---|---|---|
| S3 Standard | $0.023 | Immediate | None | 99.99% | Frequent access |
| S3 Intelligent-Tiering | $0.023* | Immediate | None | 99.9% | Unknown/changing patterns |
| S3 Standard-IA | $0.0125 | Immediate | 30 days | 99.9% | Infrequent but fast |
| S3 One Zone-IA | $0.01 | Immediate | 30 days | 99.5% | Reproducible infrequent |
| S3 Glacier Instant | $0.004 | Immediate | 90 days | 99.9% | Archive, instant access |
| S3 Glacier Flexible | $0.0036 | Minutes-hrs | 90 days | 99.99% | Archive, hours OK |
| S3 Glacier Deep Archive | $0.00099 | 12-48 hours | 180 days | 99.99% | Long-term compliance |
| S3 Express One Zone | $0.16 | Single-digit ms | 1 hour | 99.95% | Ultra-low latency |

* Intelligent-Tiering: same storage rate per tier, plus $0.0025/1000 objects monitoring fee

IA classes: also charge per-GB retrieval fee ($0.01/GB for Standard-IA)

Choosing the Right Storage Class
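The trade-off between a lower storage rate and a per-GB retrieval fee can be checked with simple arithmetic. The following is a minimal sketch using the us-east-1 rates quoted in this article. The function names and the 1 TB workload are hypothetical, and the model deliberately ignores request fees, minimum storage durations, and data transfer.

```python
# Rates copied from this article (us-east-1). Check the current AWS price
# list before relying on them.
RATES = {
    # class: (storage $/GB/month, retrieval $/GB)
    "STANDARD": (0.023, 0.0),
    "STANDARD_IA": (0.0125, 0.01),
    "ONEZONE_IA": (0.01, 0.01),
    "GLACIER_IR": (0.004, 0.03),
}


def monthly_cost(storage_class, stored_gb, retrieved_gb):
    """Storage plus retrieval cost for one month (simplified model)."""
    storage_rate, retrieval_rate = RATES[storage_class]
    return stored_gb * storage_rate + retrieved_gb * retrieval_rate


def cheapest_class(stored_gb, retrieved_gb):
    """Class with the lowest simplified monthly cost for this workload."""
    return min(RATES, key=lambda c: monthly_cost(c, stored_gb, retrieved_gb))


# 1 TB stored: compare a cold month with a month that reads everything twice.
for retrieved_gb in (50, 2000):
    costs = {c: round(monthly_cost(c, 1000, retrieved_gb), 2) for c in RATES}
    print(retrieved_gb, costs, cheapest_class(1000, retrieved_gb))
```

Under these assumptions the archive-style classes win only while retrieval volume stays small; once the data is read heavily, the retrieval fee outweighs the storage saving.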

# Decision guide:

# Accessed multiple times per day?

# YES --> S3 Standard

# Access pattern is unpredictable?

# YES --> S3 Intelligent-Tiering (auto-moves between tiers)

# Accessed less than once per month, but need instant access?

# YES --> S3 Standard-IA (or One Zone-IA if data is reproducible)

# Archival data, need access within milliseconds when requested?

# YES --> S3 Glacier Instant Retrieval

# Archival data, can wait minutes to hours?

# YES --> S3 Glacier Flexible Retrieval

# Compliance/legal archives, rarely if ever accessed?

# YES --> S3 Glacier Deep Archive ($0.00099/GB = ~$1/TB/month)

# Analytics / ML with single-digit millisecond latency?

# YES --> S3 Express One Zone (directory buckets)

Setting Storage Class

# Upload with specific storage class

aws s3 cp backup.zip s3://my-bucket/backups/ --storage-class STANDARD_IA

# Change storage class of existing object (creates a copy)

aws s3 cp s3://my-bucket/old-logs.gz s3://my-bucket/old-logs.gz --storage-class GLACIER

# Upload with storage class in Python

s3.put_object(

Bucket='my-bucket',

Key='archive/2024-data.tar.gz',

Body=data,

StorageClass='GLACIER'

)

# Available StorageClass values:

# 'STANDARD' - S3 Standard

# 'INTELLIGENT_TIERING' - Intelligent-Tiering

# 'STANDARD_IA' - Standard Infrequent Access

# 'ONEZONE_IA' - One Zone Infrequent Access

# 'GLACIER_IR' - Glacier Instant Retrieval

# 'GLACIER' - Glacier Flexible Retrieval

# 'DEEP_ARCHIVE' - Glacier Deep Archive

Lifecycle Policies

Lifecycle policies automate storage class transitions and object expiration. This is how you optimize costs without manual intervention.

{

"Rules": [

{

"ID": "TransitionAndExpire",

"Status": "Enabled",

"Filter": {

"Prefix": "logs/"

},

"Transitions": [

{

"Days": 30,

"StorageClass": "STANDARD_IA"

},

{

"Days": 90,

"StorageClass": "GLACIER"

},

{

"Days": 365,

"StorageClass": "DEEP_ARCHIVE"

}

],

"Expiration": {

"Days": 2555

}

},

{

"ID": "CleanupIncompleteUploads",

"Status": "Enabled",

"Filter": {},

"AbortIncompleteMultipartUpload": {

"DaysAfterInitiation": 7

}

},

{

"ID": "ExpireOldVersions",

"Status": "Enabled",

"Filter": {},

"NoncurrentVersionTransitions": [

{

"NoncurrentDays": 30,

"StorageClass": "STANDARD_IA"

},

{

"NoncurrentDays": 90,

"StorageClass": "GLACIER"

}

],

"NoncurrentVersionExpiration": {

"NoncurrentDays": 365

}

}

]

}

# Apply lifecycle configuration

aws s3api put-bucket-lifecycle-configuration \

--bucket my-bucket \

--lifecycle-configuration file://lifecycle.json

# View current lifecycle rules

aws s3api get-bucket-lifecycle-configuration --bucket my-bucket

The AbortIncompleteMultipartUpload rule is important: incomplete multipart uploads accumulate silently and you get charged for the stored parts. Always include this rule.
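To see what the TransitionAndExpire rule does to a single object over time, the schedule can be traced in a few lines of Python. This is only a sketch of the day thresholds from the configuration above. The function name `state_at` is hypothetical, and real lifecycle actions run asynchronously, so actual transitions can lag the configured day.

```python
# (age in days at which the transition applies, resulting storage class),
# mirroring the "TransitionAndExpire" rule for the logs/ prefix.
TRANSITIONS = [(30, "STANDARD_IA"), (90, "GLACIER"), (365, "DEEP_ARCHIVE")]
EXPIRATION_DAYS = 2555  # roughly seven years


def state_at(age_days, initial_class="STANDARD"):
    """Storage class an object would be in at `age_days`, or None once expired."""
    if age_days >= EXPIRATION_DAYS:
        return None
    current = initial_class
    for threshold, storage_class in TRANSITIONS:
        if age_days >= threshold:
            current = storage_class
    return current


for age in (0, 45, 200, 1000, 3000):
    print(age, state_at(age))
```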

Versioning

Versioning preserves every version of every object in the bucket. When you overwrite or delete an object, the previous versions remain accessible.

# Enable versioning

aws s3api put-bucket-versioning \

--bucket my-bucket \

--versioning-configuration Status=Enabled

# List all versions of an object

aws s3api list-object-versions \

--bucket my-bucket \

--prefix reports/quarterly.pdf

# Download a specific version

aws s3api get-object \

--bucket my-bucket \

--key reports/quarterly.pdf \

--version-id "abc123def456" \

restored-file.pdf

# "Delete" an object (adds a delete marker, previous versions remain)

aws s3 rm s3://my-bucket/reports/quarterly.pdf

# Permanently delete a specific version

aws s3api delete-object \

--bucket my-bucket \

--key reports/quarterly.pdf \

--version-id "abc123def456"

With versioning enabled, a standard DELETE does not remove data. It inserts a delete marker that hides the current version. All previous versions remain and continue to incur storage costs. Use lifecycle policies to expire old versions automatically.
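The delete-marker behaviour is easier to reason about with a small model. The class below is a toy, in-memory stand-in for a single key in a versioning-enabled bucket. It is not an S3 API, and `ToyVersionedKey` and its version ids are made up for illustration.

```python
import itertools


class ToyVersionedKey:
    """In-memory stand-in for one key in a versioning-enabled bucket."""

    def __init__(self):
        self._ids = itertools.count(1)
        # Newest last: (version_id, body), where body None means delete marker.
        self.versions = []

    def put(self, body):
        version_id = f"v{next(self._ids)}"
        self.versions.append((version_id, body))
        return version_id

    def delete(self, version_id=None):
        if version_id is None:
            # Simple delete: add a delete marker, keep every earlier version.
            return self.put(None)
        # Versioned delete: permanently remove exactly that version.
        self.versions = [v for v in self.versions if v[0] != version_id]
        return version_id

    def get(self, version_id=None):
        if version_id is not None:
            return dict(self.versions)[version_id]
        if not self.versions or self.versions[-1][1] is None:
            raise KeyError("no current version (missing or delete marker)")
        return self.versions[-1][1]


key = ToyVersionedKey()
first = key.put(b"draft")
key.put(b"final")
marker = key.delete()            # hides the object; both bodies are still stored
stored_after_delete = len(key.versions)
old_body = key.get(first)        # earlier versions stay readable by id
key.delete(marker)               # removing the marker "undeletes" the object
restored = key.get()
print(stored_after_delete, old_body, restored)
```

The count after the simple delete is what matters for billing: nothing was freed, and one more entry was added.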

Security

Block Public Access

Block Public Access is an account-level and bucket-level control that overrides any policy or ACL that would grant public access. Enable it unless you specifically need public access (static website hosting, public datasets).
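When auditing many buckets, it helps to check that all four settings are on rather than just one. The helper below is a sketch that inspects a dictionary shaped like the response of `get_public_access_block`. The function name and the sample dictionary are hypothetical, and the sample is hand-written rather than real API output.

```python
REQUIRED_SETTINGS = (
    "BlockPublicAcls",
    "IgnorePublicAcls",
    "BlockPublicPolicy",
    "RestrictPublicBuckets",
)


def missing_public_access_blocks(response):
    """Return the settings that are absent or not True in the response dict."""
    config = response.get("PublicAccessBlockConfiguration", {})
    return [name for name in REQUIRED_SETTINGS if config.get(name) is not True]


# Hand-written sample shaped like the API response.
sample = {
    "PublicAccessBlockConfiguration": {
        "BlockPublicAcls": True,
        "IgnorePublicAcls": True,
        "BlockPublicPolicy": False,
        "RestrictPublicBuckets": True,
    }
}
print(missing_public_access_blocks(sample))
```

An empty result means the bucket is fully covered; anything else names the setting to fix.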

# Enable Block Public Access on a bucket (all four settings)

aws s3api put-public-access-block \

--bucket my-bucket \

--public-access-block-configuration \

BlockPublicAcls=true,IgnorePublicAcls=true,BlockPublicPolicy=true,RestrictPublicBuckets=true

# Enable at the account level (applies to all buckets)

aws s3control put-public-access-block \

--account-id 123456789012 \

--public-access-block-configuration \

BlockPublicAcls=true,IgnorePublicAcls=true,BlockPublicPolicy=true,RestrictPublicBuckets=true

Bucket Policies

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "EnforceHTTPS",

"Effect": "Deny",

"Principal": "*",

"Action": "s3:*",

"Resource": [

"arn:aws:s3:::my-bucket",

"arn:aws:s3:::my-bucket/*"

],

"Condition": {

"Bool": {

"aws:SecureTransport": "false"

}

}

},

{

"Sid": "EnforceEncryption",

"Effect": "Deny",

"Principal": "*",

"Action": "s3:PutObject",

"Resource": "arn:aws:s3:::my-bucket/*",

"Condition": {

"StringNotEquals": {

"s3:x-amz-server-side-encryption": "aws:kms"

}

}

},

{

"Sid": "RestrictToVPC",

"Effect": "Deny",

"Principal": "*",

"Action": "s3:*",

"Resource": [

"arn:aws:s3:::my-bucket",

"arn:aws:s3:::my-bucket/*"

],

"Condition": {

"StringNotEquals": {

"aws:sourceVpce": "vpce-1234567890abcdef0"

}

}

}

]

}

# Apply bucket policy

aws s3api put-bucket-policy \

--bucket my-bucket \

--policy file://bucket-policy.json

Pre-Signed URLs

Pre-signed URLs grant temporary access to private objects without changing bucket permissions. The URL includes a signature and expiration time.
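Since the expiration travels in the URL itself, a recipient or a log processor can work out when a link stops working without calling AWS. The sketch below assumes a Signature Version 4 URL, which carries `X-Amz-Date` and `X-Amz-Expires` query parameters; older-style URLs use a different `Expires` parameter and are not handled. The function name and the example URL, including its credential and signature values, are placeholders.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import parse_qs, urlparse


def presigned_expiry(url):
    """Return the UTC expiry time encoded in a SigV4 pre-signed URL."""
    query = parse_qs(urlparse(url).query)
    signed_at = datetime.strptime(query["X-Amz-Date"][0], "%Y%m%dT%H%M%SZ")
    lifetime = timedelta(seconds=int(query["X-Amz-Expires"][0]))
    return signed_at.replace(tzinfo=timezone.utc) + lifetime


# Hand-built example URL; it is not a working link.
example_url = (
    "https://my-bucket.s3.amazonaws.com/reports/confidential.pdf"
    "?X-Amz-Algorithm=AWS4-HMAC-SHA256"
    "&X-Amz-Credential=EXAMPLE%2F20250101%2Fus-east-1%2Fs3%2Faws4_request"
    "&X-Amz-Date=20250101T120000Z"
    "&X-Amz-Expires=3600"
    "&X-Amz-SignedHeaders=host"
    "&X-Amz-Signature=0000"
)
expires_at = presigned_expiry(example_url)
print(expires_at.isoformat())
```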

# Generate a pre-signed download URL (expires in 1 hour)

url = s3.generate_presigned_url(

'get_object',

Params={'Bucket': 'my-bucket', 'Key': 'reports/confidential.pdf'},

ExpiresIn=3600

)

# Share this URL -- anyone with it can download the file until it expires

# Generate a pre-signed upload URL

url = s3.generate_presigned_url(

'put_object',

Params={

'Bucket': 'my-bucket',

'Key': 'uploads/user-file.pdf',

'ContentType': 'application/pdf'

},

ExpiresIn=600 # 10 minutes to upload

)

# Client can PUT to this URL without AWS credentials

# Generate pre-signed URL with CLI

aws s3 presign s3://my-bucket/reports/confidential.pdf --expires-in 3600

Encryption

S3 supports three server-side encryption options. All encrypt data at rest.

# Encryption types:

# SSE-S3 (default since Jan 2023)

# - AWS manages the key entirely

# - AES-256 encryption

# - No additional cost

# - No audit trail of key usage

# SSE-KMS

# - Uses AWS KMS keys (AWS managed or customer managed)

# - Provides audit trail via CloudTrail

# - Supports key rotation and access policies

# - $0.03 per 10,000 requests to KMS

# - Recommended for regulated workloads

# SSE-C (Customer-Provided Keys)

# - You provide the encryption key with every request

# - AWS does not store the key

# - You are responsible for key management

# - Use case: strict key control requirements

# Enable default SSE-KMS encryption on a bucket

aws s3api put-bucket-encryption \

--bucket my-bucket \

--server-side-encryption-configuration '{

"Rules": [{

"ApplyServerSideEncryptionByDefault": {

"SSEAlgorithm": "aws:kms",

"KMSMasterKeyID": "arn:aws:kms:us-east-1:123456789012:key/my-key-id"

},

"BucketKeyEnabled": true

}]

}'

# BucketKeyEnabled reduces KMS API calls (and costs) by using a

# bucket-level key derived from the KMS key

# Upload with SSE-KMS encryption

s3.put_object(

Bucket='my-bucket',

Key='sensitive/data.json',

Body=json.dumps(data),

ServerSideEncryption='aws:kms',

SSEKMSKeyId='arn:aws:kms:us-east-1:123456789012:key/my-key-id'

)

Replication

S3 replication copies objects automatically between buckets. Use it for disaster recovery, compliance (data in multiple regions), or latency reduction.

# Cross-Region Replication (CRR): between buckets in different regions

# Same-Region Replication (SRR): between buckets in the same region

# Prerequisites:

# 1. Versioning must be enabled on BOTH source and destination buckets

# 2. An IAM role that allows S3 to replicate objects

# Enable versioning on both buckets

aws s3api put-bucket-versioning \

--bucket source-bucket \

--versioning-configuration Status=Enabled

aws s3api put-bucket-versioning \

--bucket destination-bucket \

--versioning-configuration Status=Enabled

{

"Role": "arn:aws:iam::123456789012:role/s3-replication-role",

"Rules": [

{

"ID": "ReplicateAll",

"Status": "Enabled",

"Filter": {},

"Destination": {

"Bucket": "arn:aws:s3:::destination-bucket",

"StorageClass": "STANDARD_IA",

"EncryptionConfiguration": {

"ReplicaKmsKeyID": "arn:aws:kms:eu-west-1:123456789012:key/dest-key-id"

}

},

"DeleteMarkerReplication": {

"Status": "Enabled"

},

"SourceSelectionCriteria": {

"SseKmsEncryptedObjects": {

"Status": "Enabled"

}

}

}

]

}

# Apply replication configuration

aws s3api put-bucket-replication \

--bucket source-bucket \

--replication-configuration file://replication.json

Performance Optimization

Multipart Upload

For objects larger than 100 MB, use multipart upload. It splits the file into parts, uploads them in parallel, and assembles them on S3. Required for objects larger than 5 GB.
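Choosing a part size involves a little arithmetic, because a multipart upload is limited to 10,000 parts and each part (except the last) must be between 5 MiB and 5 GiB. The sketch below picks a part size that respects those limits. The function names are hypothetical, and in practice boto3's transfer manager and the CLI make this choice for you.

```python
MIB = 1024 * 1024
GIB = 1024 * MIB
MIN_PART = 5 * MIB      # every part except the last must be at least this big
MAX_PART = 5 * GIB
MAX_PARTS = 10_000


def ceil_div(a, b):
    """Integer division rounded up, without going through floats."""
    return -(-a // b)


def plan_parts(object_size, preferred_part_size=100 * MIB):
    """Return (part_size, part_count) that fits within the multipart limits."""
    # Grow the part size if the preferred one would need more than 10,000 parts.
    part_size = max(preferred_part_size, MIN_PART, ceil_div(object_size, MAX_PARTS))
    if part_size > MAX_PART:
        raise ValueError("object is too large for a single multipart upload")
    return part_size, max(1, ceil_div(object_size, part_size))


print(plan_parts(1 * GIB))          # 100 MiB parts are enough
print(plan_parts(5 * 1024 * GIB))   # a 5 TiB object needs larger parts
```

A 100 MiB part size covers objects up to roughly 1 TB; beyond that the part size has to grow to stay under the part-count limit.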

from boto3.s3.transfer import TransferConfig

# Configure multipart upload

config = TransferConfig(

multipart_threshold=100 * 1024 * 1024, # 100 MB: use multipart above this

max_concurrency=10, # 10 parallel upload threads

multipart_chunksize=100 * 1024 * 1024, # 100 MB per part

use_threads=True

)

# Upload with multipart (boto3 handles it automatically with TransferConfig)

s3.upload_file(

'large-dataset.tar.gz',

'my-bucket',

'datasets/large-dataset.tar.gz',

Config=config

)

# Multipart upload with the CLI

# The CLI automatically uses multipart for files > 8 MB (the default multipart_threshold)

# Configure CLI multipart settings

aws configure set default.s3.multipart_threshold 100MB

aws configure set default.s3.multipart_chunksize 100MB

# Upload a large file (multipart happens automatically)

aws s3 cp large-file.tar.gz s3://my-bucket/

Transfer Acceleration

Transfer Acceleration uses CloudFront edge locations to speed up uploads from distant clients. Data is routed from the nearest edge to S3 over the optimized AWS backbone network.

# Enable Transfer Acceleration on a bucket

aws s3api put-bucket-accelerate-configuration \

--bucket my-bucket \

--accelerate-configuration Status=Enabled

# Upload using the accelerated endpoint

aws s3 cp large-file.zip s3://my-bucket/ --endpoint-url https://s3-accelerate.amazonaws.com

# Additional cost: $0.04/GB on top of standard transfer pricing

# Worth it for cross-continent uploads where it can improve speed 50-500%

S3 Request Performance

# S3 supports:

# - 5,500 GET/HEAD requests per second per prefix

# - 3,500 PUT/COPY/POST/DELETE requests per second per prefix

#

# A "prefix" is the path up to the last slash:

# s3://bucket/images/2025/photo.jpg -- prefix is "images/2025/"

#

# To increase throughput, distribute objects across multiple prefixes:

# LOW throughput (all objects under one prefix):

s3://bucket/data/file-001.csv

s3://bucket/data/file-002.csv

s3://bucket/data/file-003.csv

# Max: 5,500 GETs/s for all files combined

# HIGH throughput (distributed across prefixes):

s3://bucket/data/a/file-001.csv

s3://bucket/data/b/file-002.csv

s3://bucket/data/c/file-003.csv

# Max: 5,500 GETs/s PER prefix = 16,500 GETs/s total

Static Website Hosting

# Enable static website hosting

aws s3 website s3://my-website-bucket/ \

--index-document index.html \

--error-document error.html

# Upload website files

aws s3 sync ./build s3://my-website-bucket/ --delete

# The website is accessible at:

# http://my-website-bucket.s3-website-us-east-1.amazonaws.com

# For HTTPS and custom domain, put CloudFront in front:

# CloudFront distribution --> S3 bucket (Origin Access Control)

# This also adds caching and global edge distribution

For production websites, always use CloudFront in front of S3. Direct S3 website endpoints only support HTTP, not HTTPS. CloudFront adds HTTPS, caching, custom domain support, and reduced latency via edge locations.

Event Notifications

S3 can trigger Lambda functions, SQS queues, or SNS topics when objects are created, deleted, or restored.
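On the receiving side, a Lambda function gets an event containing a `Records` list with the bucket name and object key for each change. A minimal handler sketch follows. The handler body and the trimmed sample event are illustrative, and only the fields actually read here are included.

```python
from urllib.parse import unquote_plus


def handler(event, context=None):
    """Collect (event name, bucket, key) for each record in an S3 notification."""
    seen = []
    for record in event.get("Records", []):
        s3_info = record["s3"]
        # Object keys arrive URL-encoded (a space becomes "+"), so decode them.
        key = unquote_plus(s3_info["object"]["key"])
        seen.append((record["eventName"], s3_info["bucket"]["name"], key))
    return seen


# Trimmed, hand-written event containing only the fields used above.
sample_event = {
    "Records": [
        {
            "eventName": "ObjectCreated:Put",
            "s3": {
                "bucket": {"name": "my-bucket"},
                "object": {"key": "uploads/summer+photo.jpg", "size": 1024},
            },
        }
    ]
}
print(handler(sample_event))
```

Forgetting to decode the key is a common source of "object not found" errors when the handler then tries to read the uploaded file.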

# Common event types:

# s3:ObjectCreated:* -- any upload

# s3:ObjectCreated:Put -- simple PUT upload

# s3:ObjectCreated:Post -- POST upload

# s3:ObjectCreated:CompleteMultipartUpload

# s3:ObjectRemoved:* -- any delete

# s3:ObjectRestore:Completed -- Glacier restore completed

{

"LambdaFunctionConfigurations": [

{

"Id": "ProcessUploads",

"LambdaFunctionArn": "arn:aws:lambda:us-east-1:123456789012:function:process-upload",

"Events": ["s3:ObjectCreated:*"],

"Filter": {

"Key": {

"FilterRules": [

{"Name": "prefix", "Value": "uploads/"},

{"Name": "suffix", "Value": ".jpg"}

]

}

}

}

],

"QueueConfigurations": [

{

"Id": "LogDeletes",

"QueueArn": "arn:aws:sqs:us-east-1:123456789012:delete-log-queue",

"Events": ["s3:ObjectRemoved:*"]

}

]

}

aws s3api put-bucket-notification-configuration \

--bucket my-bucket \

--notification-configuration file://notifications.json

S3 Select and Athena

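Instead of downloading an entire object to process it, S3 Select lets you query CSV, JSON, or Parquet files in place using SQL expressions. S3 returns only the matching rows. AWS has since closed S3 Select to new customers; accounts that already use it can continue to do so.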

# S3 Select: query a CSV file without downloading it

response = s3.select_object_content(

Bucket='my-bucket',

Key='data/sales-2025.csv',

ExpressionType='SQL',

Expression="SELECT product, revenue FROM s3object WHERE revenue > 10000",

InputSerialization={

'CSV': {'FileHeaderInfo': 'USE', 'RecordDelimiter': '\n', 'FieldDelimiter': ','}

},

OutputSerialization={'JSON': {}}

)

# Process results

for event in response['Payload']:

    if 'Records' in event:

        print(event['Records']['Payload'].decode('utf-8'))

For complex queries across many objects, use Amazon Athena. Athena runs SQL queries directly on S3 data without loading it into a database. You pay $5 per TB scanned. Use columnar formats (Parquet, ORC) and partitioning to reduce scan costs.
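Because Athena bills by bytes scanned, the effect of columnar formats and partitioning can be estimated up front. The numbers below are assumptions chosen for illustration: a hypothetical 2 TB dataset, a query that reads 3 of 20 equally sized columns, and a filter that touches 1 of 24 partitions. The sketch ignores compression and any per-query minimum charge.

```python
PRICE_PER_TB = 5.00          # USD per TB scanned, as quoted above
BYTES_PER_TB = 1024 ** 4


def query_cost(bytes_scanned):
    """Cost of one query under a pure per-byte-scanned model."""
    return bytes_scanned / BYTES_PER_TB * PRICE_PER_TB


dataset_bytes = 2 * BYTES_PER_TB   # hypothetical dataset size

# Row-oriented CSV: every query reads the whole dataset.
full_scan = query_cost(dataset_bytes)
# Columnar format: only the 3 referenced columns out of 20 are read.
columnar = query_cost(dataset_bytes * 3 / 20)
# Columnar plus partition pruning: 1 of 24 partitions is read.
columnar_partitioned = query_cost(dataset_bytes * 3 / 20 / 24)

print(round(full_scan, 2), round(columnar, 2), round(columnar_partitioned, 4))
```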

Object Lock (WORM)

Object Lock enforces write-once-read-many (WORM) protection. Once locked, an object version cannot be deleted or overwritten for a specified retention period. It is commonly used to help meet regulatory retention requirements (SEC Rule 17a-4, FINRA, HIPAA).

# Enable Object Lock when creating the bucket (versioning is enabled automatically)

aws s3api create-bucket \

--bucket compliance-bucket \

--object-lock-enabled-for-bucket

# Set default retention policy

aws s3api put-object-lock-configuration \

--bucket compliance-bucket \

--object-lock-configuration '{

"ObjectLockEnabled": "Enabled",

"Rule": {

"DefaultRetention": {

"Mode": "COMPLIANCE",

"Years": 7

}

}

}'

# Retention modes:

# GOVERNANCE -- users with special permissions can override

# COMPLIANCE -- nobody can delete or shorten retention, not even root

S3 Inventory

For large buckets with millions of objects, LIST operations are slow and expensive. S3 Inventory generates CSV, ORC, or Parquet reports of all objects in a bucket on a daily or weekly schedule.

# Configure S3 Inventory

aws s3api put-bucket-inventory-configuration \

--bucket my-bucket \

--id weekly-inventory \

--inventory-configuration '{

"Destination": {

"S3BucketDestination": {

"Bucket": "arn:aws:s3:::inventory-bucket",

"Format": "Parquet",

"Prefix": "inventory"

}

},

"IsEnabled": true,

"Id": "weekly-inventory",

"IncludedObjectVersions": "Current",

"OptionalFields": [

"Size", "LastModifiedDate", "StorageClass",

"EncryptionStatus", "IntelligentTieringAccessTier"

],

"Schedule": {"Frequency": "Weekly"}

}'

# Query the inventory with Athena to find optimization opportunities:

# - Large objects in expensive storage classes

# - Objects that could be archived

# - Unencrypted objects

S3 Pricing

# Storage pricing (us-east-1, per GB/month):

S3 Standard $0.023

S3 Standard-IA $0.0125

S3 One Zone-IA $0.01

S3 Glacier Instant $0.004

S3 Glacier Flexible $0.0036

S3 Glacier Deep Archive $0.00099

# Request pricing (per 1,000 requests):

| Storage Class | PUT/COPY/POST/LIST | GET/SELECT |
|---|---|---|
| S3 Standard | $0.005 | $0.0004 |
| S3 Standard-IA | $0.01 | $0.001 |
| S3 Glacier Flexible | $0.05 | $0.0004 |
| S3 Glacier Deep | $0.05 | $0.0004 |

# Data transfer:

Upload to S3: FREE

Transfer OUT to internet: $0.09/GB (first 10 TB/month)

Transfer to CloudFront: FREE

Transfer to same region: FREE

Transfer to other region: $0.02/GB

# Retrieval fees (per GB):

S3 Standard-IA: $0.01

S3 One Zone-IA: $0.01

Glacier Instant: $0.03

Glacier Flexible: $0.01 (Expedited: $0.03, Bulk: $0.0025)

Glacier Deep: $0.02 (Bulk: $0.0025)

Cost Optimization Tips

- Use lifecycle policies to transition data to cheaper storage classes automatically

- Enable S3 Intelligent-Tiering for data with unknown access patterns (no retrieval fees)

- Always add a lifecycle rule to abort incomplete multipart uploads

- Use S3 Inventory + Athena to audit storage and find optimization opportunities

- Put CloudFront in front of S3 to reduce data transfer costs and request costs

- Use S3 Select instead of downloading entire objects when you only need specific rows

- Enable S3 Storage Lens for account-wide visibility into storage usage and activity

- Use VPC Gateway Endpoints for S3 access from EC2 — free data transfer, no NAT Gateway costs

Best Practices Summary

Security

- Enable Block Public Access at the account level

- Enable default encryption (SSE-KMS for regulated data, SSE-S3 for everything else)

- Use bucket policies to enforce HTTPS-only access

- Use pre-signed URLs for temporary access instead of making objects public

- Enable CloudTrail data events for S3 to audit all object-level access

- Use S3 Access Points to manage access for different applications separately

Data Protection

- Enable versioning on all buckets containing important data

- Use Object Lock for compliance data that must not be deleted

- Configure cross-region replication for disaster recovery

- Use lifecycle policies to manage version expiration and avoid runaway storage costs

Performance

- Use multipart upload for files larger than 100 MB

- Distribute reads across multiple prefixes for high-throughput workloads

- Use Transfer Acceleration for cross-continent uploads

- Use byte-range fetches to download parts of large objects in parallel

- Use S3 Express One Zone for workloads requiring single-digit millisecond latency
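The advice to distribute reads across multiple prefixes can be sketched as a key-naming helper that derives a shard prefix from a stable hash of the object name. The function name `sharded_key`, the `data` root, and the shard count of 16 are assumptions for illustration. The trade-off is that hashed prefixes make it harder to list objects in name order.

```python
import hashlib


def sharded_key(key, shards=16, root="data"):
    """Place `key` under one of `shards` prefixes chosen by a stable hash."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    shard = int(digest, 16) % shards
    return f"{root}/{shard:02x}/{key}"


keys = [f"file-{n:03d}.csv" for n in range(1, 1001)]
placed = [sharded_key(k) for k in keys]
prefixes = {p.rsplit("/", 1)[0] for p in placed}
print(len(prefixes), "prefixes in use")
```

Because the hash is deterministic, a reader can recompute the full key from the object name alone, so no lookup table is needed.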
